Workflow

- The organizers release the training dataset and the parsing/evaluation tools upon request, under a usage agreement. Participation remains open until the deadline for submitting the models’ outputs. Participants are free to use the training dataset to train and tune their algorithms as necessary, and may also compute the benchmark scores for their own reference.

- Participants who decide to go ahead with an algorithm submission need to submit the binaries to the organizers so that the organizers can evaluate the performance of the algorithms. The binaries should:

  - Track 1: take an image as input and produce a saliency map (saliency models) or a scanpath (saccadic models).

  - Track 2: take as input an image and the corresponding scanpath from one observer, and classify the observer as ASD or TD.

- The organizers will then release the evaluation dataset (content only, and entirely different from the training dataset) so that each proponent can submit the outputs of their model in the defined format. The model's outputs on the evaluation dataset must be submitted online before the stated deadline.

- The organizers will compute the performance of the submitted models against the eye-tracking data and release the results to the proponent, who can then report them in publications and presentations.

Evaluation Criteria

- The saliency maps generated by the candidate’s algorithm will be compared with the ground-truth saliency maps using appropriate metrics, e.g., Normalised Scanpath Saliency (NSS), Area Under the Curve (AUC), and KL-divergence.

- For scanpath algorithms, the metric used will be the Vectors Similarity for scanpath comparison, along with a final likelihood score.

- For classification models, accuracy, sensitivity, specificity, etc. will be computed. AUC analysis can be considered if the models provide probability values.

- A proponent can submit a maximum of three models per category (saliency models in Track 1, saccadic models in Track 1, and Track 2).

- After the end of the Grand Challenge, the tools for computation of benchmark scores will be made available online for free, for easy use by the research community.

- Prizes will be offered in every category: saliency models and saccadic models in Track 1, and Track 2.

- Connection details for submission will be sent to interested parties/researchers. Please contact saliency4ASD@ls2n.fr
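As a rough illustration of the criteria listed above, the sketch below computes NSS and KL-divergence for a saliency map, and accuracy, sensitivity and specificity for ASD/TD predictions. It is not the organizers' evaluation tool. The function names, the `EPS` smoothing constant, the choice of ASD as the positive class, and the direction of the KL-divergence (ground truth relative to prediction) are all assumptions made for this example, and the official tools may differ on each point.

```python
import math

EPS = 1e-12  # assumed smoothing constant; avoids division by zero and log(0)


def _flatten(grid):
    """Flatten a 2-D list of rows into a single list of values."""
    return [value for row in grid for value in row]


def nss(saliency_map, fixation_mask):
    """Normalised Scanpath Saliency: mean z-scored saliency at fixated pixels."""
    sal = [float(v) for v in _flatten(saliency_map)]
    fix = _flatten(fixation_mask)
    mean = sum(sal) / len(sal)
    std = math.sqrt(sum((v - mean) ** 2 for v in sal) / len(sal))
    fixated = [(v - mean) / (std + EPS) for v, f in zip(sal, fix) if f]
    if not fixated:
        raise ValueError("fixation_mask contains no fixated pixels")
    return sum(fixated) / len(fixated)


def kl_divergence(predicted_map, ground_truth_map):
    """KL-divergence between two maps, each normalised to sum to 1.

    Assumed convention: sum of q * log(q / p), with q the ground truth
    and p the prediction.
    """
    pred = [float(v) for v in _flatten(predicted_map)]
    truth = [float(v) for v in _flatten(ground_truth_map)]
    pred_sum = sum(pred) + EPS
    truth_sum = sum(truth) + EPS
    total = 0.0
    for p, q in zip(pred, truth):
        p, q = p / pred_sum, q / truth_sum
        total += q * math.log(EPS + q / (p + EPS))
    return total


def classification_scores(y_true, y_pred, positive="ASD"):
    """Accuracy, sensitivity and specificity, treating `positive` as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    return {
        "accuracy": (tp + tn) / max(len(pairs), 1),
        "sensitivity": tp / max(tp + fn, 1),  # true-positive rate
        "specificity": tn / max(tn + fp, 1),  # true-negative rate
    }
```

A higher NSS means the model assigns above-average saliency to the fixated locations, while a KL-divergence close to zero means the predicted and ground-truth distributions nearly coincide. Maps are passed as lists of rows here only to keep the example dependency-free.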

Paper submission

Paper submission to ICME’19 is optional. If you want to submit a paper, please follow the instructions below:

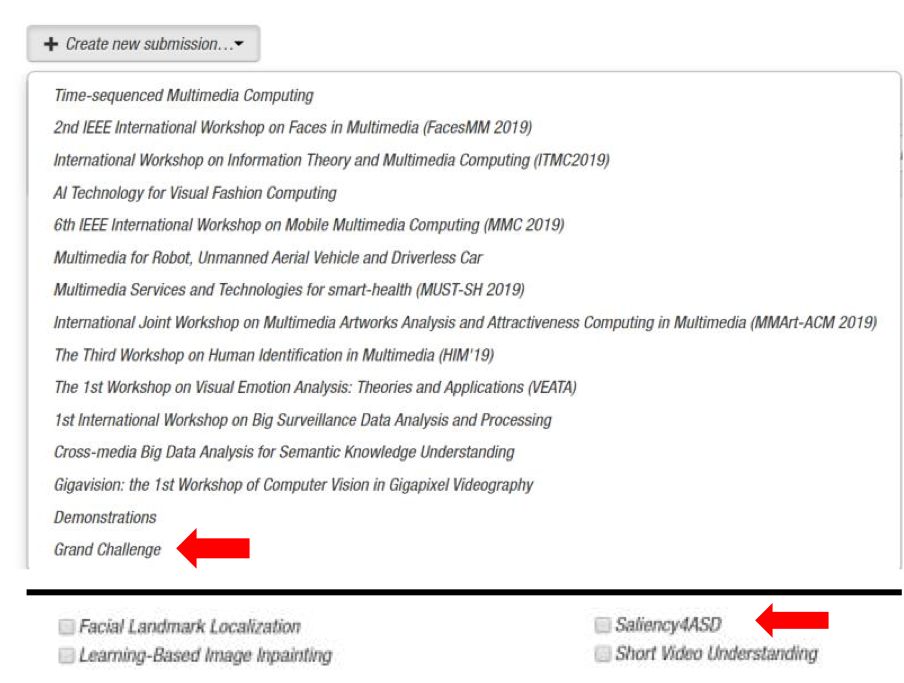

- Submission site: https://cmt3.research.microsoft.com/ICME2019W/

- After logging into the system, select “Grand Challenge” at the bottom of the list.

- Then select the subarea “saliency4ASD”.